- Hands-On Neural Networks with Keras

- Niloy Purkait

- 117 characters

- 2021-06-24 15:44:53

Cross entropy

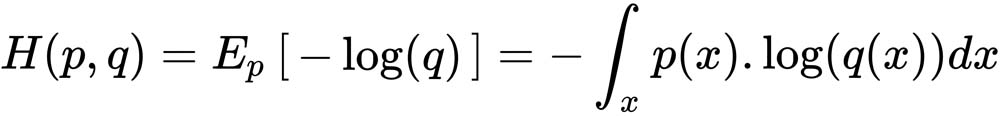

Cross entropy is yet another mathematical notion, one that allows us to compare two distinct probability distributions, denoted by p and q. In fact, as you will see later, we often employ entropy-based loss functions in neural networks when dealing with categorical targets. Essentially, the cross entropy between two probability distributions (https://en.wikipedia.org/wiki/Probability_distribution), (p, q), defined over the same underlying set of events, measures the average number of bits needed to identify an event drawn at random from that set, under one condition: the coding scheme used is optimized for a predicted probability distribution q rather than for the true distribution p. We will revisit this notion in later chapters to clarify it and implement it in practice.
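For discrete distributions, the standard definition is $$H(p, q) = -\sum_{x} p(x)\,\log_2 q(x),$$ which is measured in bits when the logarithm is base 2. The following is a minimal sketch in plain Python, not the book's or Keras's implementation. The function names `entropy` and `cross_entropy` are hypothetical, and both distributions are assumed to be lists of probabilities over the same events, listed in the same order.

```python
import math


def entropy(p):
    """Entropy H(p) = -sum_x p(x) * log2 p(x), in bits.

    Terms with p(x) == 0 contribute nothing, by the usual 0 * log 0 = 0 convention.
    """
    return -sum(px * math.log2(px) for px in p if px > 0)


def cross_entropy(p, q):
    """Cross entropy H(p, q) = -sum_x p(x) * log2 q(x), in bits.

    p: the true distribution; q: the predicted distribution the code is optimized for.
    Both are assumed to be sequences of probabilities over the same events.
    """
    if len(p) != len(q):
        raise ValueError("p and q must be defined over the same set of events")
    total = 0.0
    for px, qx in zip(p, q):
        if px == 0:
            continue  # events that never occur under p add no coding cost
        if qx == 0:
            return math.inf  # q gives zero probability to an event that does occur
        total -= px * math.log2(qx)
    return total


if __name__ == "__main__":
    p = [0.5, 0.25, 0.25]  # true distribution
    q = [0.25, 0.25, 0.5]  # predicted distribution
    print(entropy(p))           # 1.5
    print(cross_entropy(p, q))  # 1.75: 0.25 extra bits from coding with q instead of p
    # With a one-hot "true" label, only the predicted probability of the true class matters
    print(cross_entropy([0, 1, 0], [0.1, 0.8, 0.1]))  # equals -log2(0.8)
```

Note that H(p, q) is never smaller than H(p), and the two are equal only when q matches p. With a one-hot true distribution, as in classification, the sum collapses to the negative log of the probability assigned to the correct class. This is the quantity a categorical cross-entropy loss penalizes. Deep learning libraries typically use the natural logarithm instead of base 2, which only rescales the value by a constant factor.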
